<p>Business Science (<a href="https://www.business-science.io">https://www.business-science.io</a>, info@business-science.io). Feed updated 2024-12-08T07:59:34-05:00.</p>

<h1 id="create-a-pandas-dataframe-ai-agent-with-generative-ai-python-and-openai">Create A Pandas Dataframe AI Agent With Generative AI, Python And OpenAI</h1>

<p class="text-center date">Published 2025-12-08T06:00:00-05:00 at <a href="https://www.business-science.io/genai-ml-tips/2025/12/08/data-analyst-ai-agent-genai-python">https://www.business-science.io/genai-ml-tips/2025/12/08/data-analyst-ai-agent-genai-python</a></p>

<p>Hey guys, this is the first article in my <a href="https://learn.business-science.io/free-ai-tips">NEW GenAI / ML Tips Newsletter</a>. Today, we’re diving into the world of <strong>Generative AI</strong> and exploring how it can help companies automate common data science tasks. Specifically, we’ll learn how to <strong>create a Pandas dataframe agent</strong> that can answer questions about your dataset using Python, Pandas, LangChain, and OpenAI’s API. Let’s get started!</p>

<h3 id="table-of-contents">Table of Contents</h3>

<p>Here’s what you’ll learn in this article:</p>

<ul>

<li>Why Generative AI is Transforming Data Science</li>

<li>What is a Pandas Data Frame Agent?</li>

<li>Create a Pandas DataFrame Agent

<ul>

<li>Setting Up the Environment</li>

<li>Loading and Exploring the Dataset</li>

<li>Creating the Data Analysis Agent with LangChain</li>

<li>Interacting with the Agent</li>

<li>Visualizing the Results</li>

</ul>

</li>

<li><strong>Before You Go Any Further:</strong> <strong><a href="https://learn.business-science.io/free-ai-tips?el=website">Join the Free GenAI/ML Tips Newsletter to get the Data and Code so you can follow along</a></strong></li>

</ul>

<h1 id="this-is-what-you-are-making-today">This is what you are making today</h1>

<p>We’ll use this <strong>Generative AI Workflow</strong> to combine data (from CSVs or SQL databases) with a Pandas Data Frame Agent that helps us produce common analytics outputs like visualizations and reports.</p>

<p><img src="/assets/AI001_pandas_data_agent.jpg" alt="Make A Pandas Data Analysis Agent with Python and Generative AI" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/free-ai-tips?el=website" target="_blank">Get the Code (In the AI-Tip 001 Folder)</a></p>

<hr />

<!--

# SPECIAL ANNOUNCEMENT: How To Become A <u>6-Figure Business Scientist</u> (Even In A Recession) on August 30th

**What:** How To Become A 6-Figure Business Scientist (Even In A Recession)

**When:** Wednesday August 30th, 2pm EST

**How It Will Help You:** Data science in 2023 has changed. *The 10+ person data science team is out.* And the one-person Business Scientist is in. I'll show you how to become a 1-person data science team inside [my LIVE 6-figure business scientist masterclass](https://learn.business-science.io/registration-2-page?el=website).

**Price:** Does **Free** sound good?

**How To Join:** [**👉 Register Here**](https://learn.business-science.io/registration-2-page?el=website)

-->

<!--

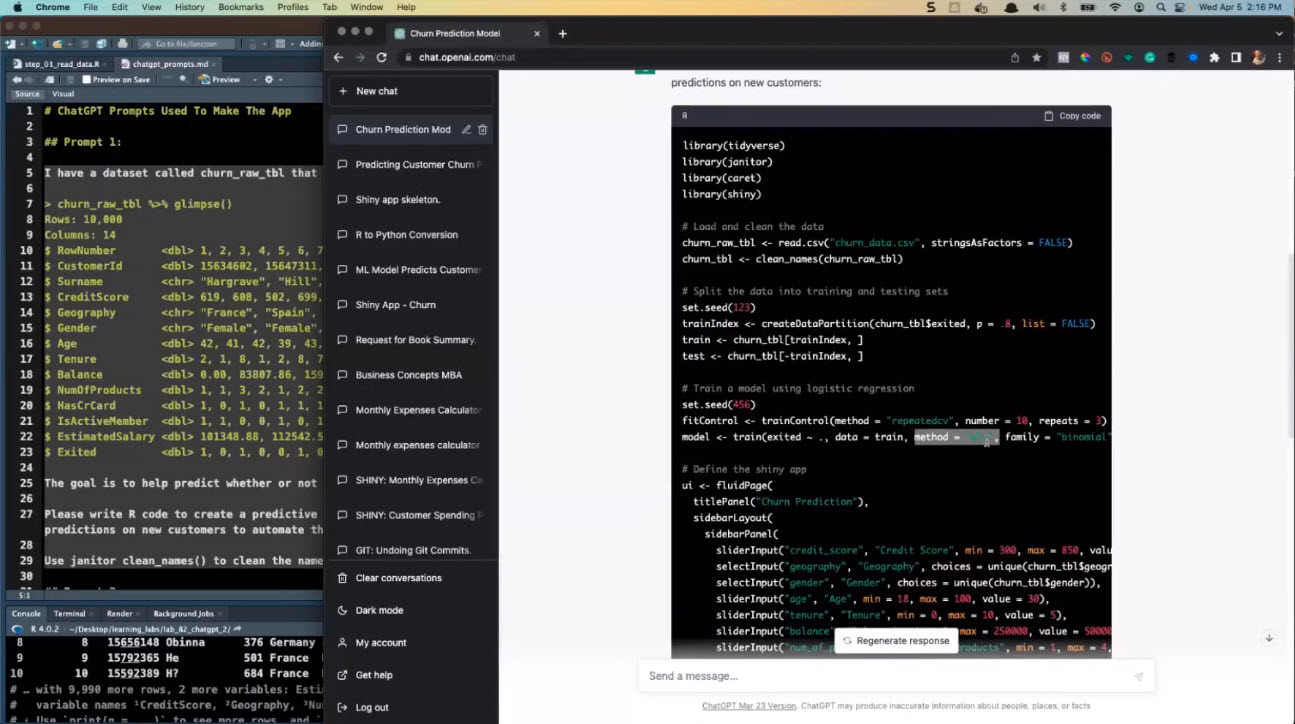

# SPECIAL ANNOUNCEMENT: ChatGPT for Data Scientists Workshop on December 11th

[Inside the workshop](https://learn.business-science.io/registration-chatgpt-2?el=website) I'll share how I built a Machine Learning Powered Production Shiny App with `ChatGPT` (extends this data analysis to an *insane* production app):

**What:** ChatGPT for Data Scientists

**When:** Wednesday December 11th, 2pm EST

**How It Will Help You:** Whether you are new to data science or are an expert, ChatGPT is changing the game. There's a ton of hype. But how can ChatGPT actually help you become a better data scientist and help you stand out in your career? I'll show you inside [my free chatgpt for data scientists workshop](https://learn.business-science.io/registration-chatgpt-2?el=website).

**Price:** Does **Free** sound good?

**How To Join:** [**👉 Register Here**](https://learn.business-science.io/registration-chatgpt-2?el=website)

-->

<h1 id="special-announcement-ai-for-data-scientists-workshop-on-december-18th">SPECIAL ANNOUNCEMENT: AI for Data Scientists Workshop on December 18th</h1>

<p><a href="https://learn.business-science.io/ai-register">Inside the workshop</a> I’ll share how I built a SQL-Writing Business Intelligence Agent with Generative AI:</p>

<p><img src="/assets/how_to_create_a_business_intelligence_ai_copilot.jpg" alt="Generative AI for Data Scientists" /></p>

<p><strong>What:</strong> GenAI for Data Scientists</p>

<p><strong>When:</strong> Wednesday December 18th, 2pm EST</p>

<p><strong>How It Will Help You:</strong> Whether you are new to data science or are an expert, Generative AI is changing the game. There’s a ton of hype. But how can Generative AI actually help you become a better data scientist and help you stand out in your career? I’ll show you inside <a href="https://learn.business-science.io/ai-register">my free Generative AI for Data Scientists workshop</a>.</p>

<p><strong>Price:</strong> Does <strong>Free</strong> sound good?</p>

<p><strong>How To Join:</strong> <a href="https://learn.business-science.io/ai-register"><strong>👉 Register Here</strong></a></p>

<hr />

<h1 id="genaiml-tips-weekly">GenAI/ML-Tips Weekly</h1>

<p>This article is part of GenAI/ML Tips Weekly, a <a href="https://learn.business-science.io/free-ai-tips?el=website" target="_blank">weekly video tutorial</a> that shows you step-by-step how to do common Data Science and Generative AI coding tasks. Pretty cool, right?</p>

<p>Here is the link to get set up. 👇</p>

<ul>

<li><a href="https://learn.business-science.io/free-ai-tips?el=website" target="_blank">Sign up for our GenAI/ML Tips Newsletter and get the code.</a></li>

</ul>

<p><img src="/assets/AI001_get_the_code.jpg" alt="Get the code" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/free-ai-tips?el=website" target="_blank">Get the Code (In the GenAI/ML Tip 001 Folder)</a></p>

<h1 id="this-tutorial-is-available-in-video-9-minutes">This Tutorial is Available in Video (9-minutes)</h1>

<p>I have a <strong>9-minute video</strong> that walks you through setting up the Pandas Data Frame Agent and running data analysis with it. 👇</p>

<iframe width="100%" height="450" src="https://www.youtube.com/embed/2LDyRnUgLos" title="YouTube video player" frameborder="1" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen=""></iframe>

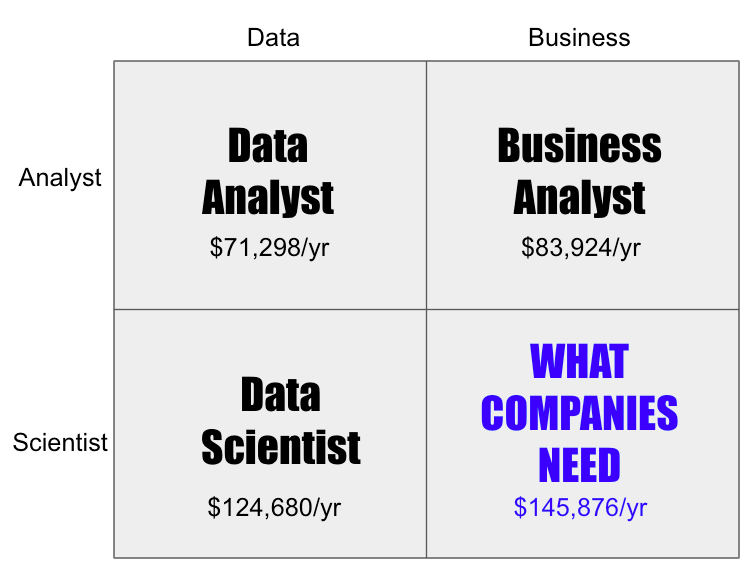

<h1 id="why-generative-ai-is-transforming-data-science">Why Generative AI is Transforming Data Science</h1>

<p>Generative AI, powered by models like OpenAI’s GPT series, is reshaping the data science landscape. These models can understand and generate human-like text, making it possible to interact with data in more intuitive ways. By integrating Generative AI into data science, you can:</p>

<ul>

<li><strong>Automate Data Insights:</strong> Quickly generate summaries and insights from complex datasets.</li>

<li><strong>Enhance Decision Making:</strong> Obtain answers to specific questions without manually sifting through data.</li>

<li><strong>Improve Accessibility:</strong> Make data science more accessible to non-technical stakeholders.</li>

</ul>

<p>Creating a <strong>Pandas dataframe agent</strong> combines the power of AI with data science, enabling you to unlock new possibilities in data exploration and interpretation from Natural Language.</p>

<h1 id="what-is-a-pandas-data-frame-agent">What is a Pandas Data Frame Agent?</h1>

<p>A <strong>Pandas Data Frame Agent</strong> automates common Pandas operations from Natural Language inputs.</p>

<p>It can be used to perform:</p>

<ul>

<li>GroupBy + Aggregate</li>

<li>Math calculations (that normal LLMs struggle with)</li>

<li>Filters</li>

<li>Pivots</li>

<li>Window calculations</li>

<li>Resampling (Time Series)</li>

<li>Binning</li>

<li>Log Transformations</li>

<li>Summary Statistics (Mean, Median, IQR, Min/Max, Count (frequency), etc)</li>

</ul>

<p>All from Natural Language prompts.</p>
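<p>To make the list above concrete, here is a minimal sketch of the kind of Pandas code such an agent might write and run for you behind the scenes. The toy data and column names (<code class="language-plaintext highlighter-rouge">geography</code>, <code class="language-plaintext highlighter-rouge">sales</code>) are assumptions for illustration, not the tutorial dataset.</p>

```python
import pandas as pd

# Hypothetical toy data; the column names are assumptions for illustration.
df = pd.DataFrame({
    "geography": ["East", "West", "East", "West", "North"],
    "sales": [100.0, 250.0, 50.0, 150.0, 75.0],
})

# GroupBy + Aggregate: total sales per geography.
sales_by_geo = df.groupby("geography", as_index=False)["sales"].sum()

# Filter: keep only rows with sales of at least 100.
large_orders = df[df["sales"] >= 100]

# Summary statistics: count, mean, std, min, quartiles, max.
summary = df["sales"].describe()

print(sales_by_geo)
```

<p>The point of the agent is that a question like “What are the total sales by geography?” gets translated into a call like the <code class="language-plaintext highlighter-rouge">groupby()</code> line, so the math is done by Pandas rather than by the LLM.</p>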

<h1 id="make-a-pandas-data-frame-agent">Make A Pandas Data Frame Agent</h1>

<p>Let’s walk through the steps to create a Pandas data frame agent that can answer questions about a dataset using Python, OpenAI’s API, Pandas, and LangChain.</p>

<p><strong>Quick Reminder:</strong> You can get all of the code and datasets shown in a Python Script and Jupyter Notebook when you <a href="https://learn.business-science.io/free-ai-tips?el=website">join my GenAI/ML Tips Newsletter</a>.</p>

<p><strong>Code Location:</strong> <code class="language-plaintext highlighter-rouge">/001_pandas_dataframe_agent</code></p>

<h2 id="step-1-setting-up-the-python-environment">Step 1: Setting Up the Python Environment</h2>

<p>First, you’ll need to set up your Python environment and install the required libraries.</p>

<div class="language-python highlighter-rouge"><div class="highlight"><pre class="highlight"><code><span class="n">pip</span> <span class="n">install</span> <span class="n">openai</span> <span class="n">langchain</span> <span class="n">langchain_openai</span> <span class="n">langchain_experimental</span> <span class="n">pandas</span> <span class="n">plotly</span> <span class="n">pyyaml</span>

</code></pre></div></div>

<p>Next, import the libraries.</p>

<p><img src="/assets/AI001_libraries_1.jpg" alt="Libraries" /></p>

<p>Then run this to access our utility function, <code class="language-plaintext highlighter-rouge">parse_json_to_dataframe()</code>.</p>

<p><img src="/assets/AI001_utility_function.jpg" alt="Utility Function" /></p>

<p>The last part is to set up your OpenAI API Key. Make sure to get an API Key from OpenAI’s API website.</p>

<p><img src="/assets/AI001_openai_api_key.jpg" alt="OpenAI API Key" /></p>

<p><strong>Note:</strong> Replace ‘credentials.yml’ with the path to your YAML file containing the OpenAI API key or set the ‘OPENAI_API_KEY’ environment variable directly.</p>
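<p>As a rough sketch of the two options in the note, the helper below first checks the <code class="language-plaintext highlighter-rouge">OPENAI_API_KEY</code> environment variable and only then falls back to the YAML file. The function name and the <code class="language-plaintext highlighter-rouge">openai</code> field name inside the YAML file are assumptions, so adjust them to match your own credentials file.</p>

```python
import os
from pathlib import Path


def load_openai_api_key(path="credentials.yml", key="openai"):
    """Return the API key from the environment or, failing that, a YAML file."""
    # Prefer the environment variable when it is already set.
    env_key = os.environ.get("OPENAI_API_KEY")
    if env_key:
        return env_key

    # Otherwise read it from the YAML file.
    # The 'openai' field name is an assumption about the file layout.
    import yaml  # provided by the pyyaml package installed above

    with Path(path).open() as f:
        credentials = yaml.safe_load(f)
    api_key = credentials[key]

    # Export it so that libraries reading OPENAI_API_KEY can find it.
    os.environ["OPENAI_API_KEY"] = api_key
    return api_key
```

<p>Either way, keep the key out of your scripts and out of version control.</p>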

<h2 id="step-2-loading-and-exploring-the-dataset">Step 2: Loading and Exploring the Dataset</h2>

<p>Load your dataset into a Pandas DataFrame. For this tutorial, we’ll use a sample customer data CSV file. But you could easily use any data that you can get into a Pandas Data Frame:</p>

<ul>

<li>SQL Database</li>

<li>CSV</li>

<li>Excel File</li>

</ul>

<p>Run this code to load the customer dataset:</p>

<p><img src="/assets/AI001_customer_dataset.jpg" alt="Load The Customer Dataset" /></p>

<p>This dataset contains customer information, including sales and geography data.</p>
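<p>Here is a minimal sketch of the loading step. The inline CSV text and its columns are stand-ins I made up so the example runs on its own; with the real file you would pass its path to <code class="language-plaintext highlighter-rouge">pd.read_csv()</code>, and <code class="language-plaintext highlighter-rouge">pd.read_excel()</code> or <code class="language-plaintext highlighter-rouge">pd.read_sql()</code> cover the other sources listed above.</p>

```python
import io

import pandas as pd

# Stand-in for the customer CSV; these columns are assumptions, not the real file.
csv_text = """customer_id,geography,sales
1,East,100.0
2,West,250.0
3,East,50.0
"""

# With a file on disk this would be pd.read_csv("path/to/your_file.csv").
df = pd.read_csv(io.StringIO(csv_text))

# Quick exploration: dimensions, column types, and the first rows.
print(df.shape)
print(df.dtypes)
print(df.head())
```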

<h2 id="step-3-create-the-pandas-data-analysis-agent-with-langchain">Step 3: Create the Pandas Data Analysis Agent with LangChain</h2>

<p>Initialize the language model and create the Pandas data analysis agent using LangChain.</p>

<p><img src="/assets/AI001_create_pandas_data_frame_agent.jpg" alt="Create The Pandas Data Frame Agent" /></p>

<p>This is what’s happening:</p>

<ul>

<li><code class="language-plaintext highlighter-rouge">ChatOpenAI</code>: Initializes the OpenAI language model.</li>

<li><code class="language-plaintext highlighter-rouge">create_pandas_dataframe_agent</code>: Creates an agent that can interact with the Pandas DataFrame.</li>

<li><code class="language-plaintext highlighter-rouge">agent_type</code>: Specifies the type of agent (using OpenAI functions).</li>

<li><code class="language-plaintext highlighter-rouge">suffix</code>: Instructs the agent to return results in JSON format for easy parsing.</li>

</ul>

<p><strong>Pro-Tip:</strong> The secret sauce is to use the <code class="language-plaintext highlighter-rouge">suffix</code> parameter to specify the output format. Under the hood, this appends extra instructions to the agent’s default prompt template that describe how to return the results.</p>
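<p>The four pieces described above fit together roughly like this. Treat it as a sketch under assumptions: the helper name <code class="language-plaintext highlighter-rouge">build_agent()</code>, the model name, and the suffix wording are mine, and the import paths and the <code class="language-plaintext highlighter-rouge">allow_dangerous_code</code> flag depend on which LangChain versions you have installed.</p>

```python
# Instruction appended to the agent's prompt; the wording here is an assumption.
JSON_SUFFIX = (
    "Always return the final answer as a JSON object "
    "so that it can be parsed into a data frame."
)


def build_agent(df, model="gpt-4o-mini"):
    """Create a Pandas DataFrame agent that is asked to answer in JSON."""
    # Imported inside the function so the sketch loads without the packages.
    # These import paths may differ between LangChain versions.
    from langchain.agents import AgentType
    from langchain_experimental.agents import create_pandas_dataframe_agent
    from langchain_openai import ChatOpenAI

    # The model name is an assumption; use any chat model your key can access.
    llm = ChatOpenAI(model=model, temperature=0)

    return create_pandas_dataframe_agent(
        llm,
        df,
        agent_type=AgentType.OPENAI_FUNCTIONS,
        suffix=JSON_SUFFIX,
        verbose=True,
        # Recent langchain_experimental releases require this opt-in because
        # the agent executes model-generated Python code.
        allow_dangerous_code=True,
    )
```

<p>With an API key set, <code class="language-plaintext highlighter-rouge">agent = build_agent(df)</code> followed by <code class="language-plaintext highlighter-rouge">agent.invoke({"input": "What are the total sales by geography?"})</code> should return a dictionary whose <code class="language-plaintext highlighter-rouge">"output"</code> entry holds the answer text. Because the agent runs generated code on your machine, only use it in an environment you trust.</p>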

<h2 id="step-4-interacting-with-the-pandas-data-frame-agent">Step 4: Interacting with the Pandas Data Frame Agent</h2>

<p>Now, you can ask the agent questions about your data. Try running this code with a Natural Language analysis question:</p>

<blockquote>

<p>“What are the total sales by geography?”</p>

</blockquote>

<p><img src="/assets/AI001_invoke_the_agent.jpg" alt="Invoke the agent" /></p>

<p>The agent processes the query and returns a response.</p>

<p><img src="/assets/AI001_process_query.jpg" alt="Process Query" /></p>

<p>This is where post-processing comes into play. Remember when I added the <code class="language-plaintext highlighter-rouge">suffix</code> parameter to return JSON? The agent actually buries the JSON in a string.</p>

<p><img src="/assets/AI001_json_string.jpg" alt="JSON String" /></p>

<p>That’s OK, because I have created a handy little parsing tool that extracts the JSON from the string and converts it to a Pandas Data Frame for us.</p>

<p><img src="/assets/AI001_convert_json_to_pandas.jpg" alt="Convert JSON To Pandas" /></p>
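<p>The real <code class="language-plaintext highlighter-rouge">parse_json_to_dataframe()</code> utility ships with the newsletter code. As a hypothetical stand-in, here is one way the extraction step could work using only the standard library: scan the string for the first position where a valid JSON object or array starts and decode from there. The function name and the sample response text are assumptions.</p>

```python
import json


def extract_json_records(text):
    """Return the first JSON object or array embedded in a larger string."""
    decoder = json.JSONDecoder()
    for start, char in enumerate(text):
        if char not in "{[":
            continue
        try:
            # raw_decode parses one JSON value and ignores any trailing text.
            value, _ = decoder.raw_decode(text[start:])
        except json.JSONDecodeError:
            continue
        return value
    raise ValueError("No JSON object or array found in the text")


# Hypothetical agent output with the JSON buried inside a sentence.
response_text = (
    "Here are the totals: "
    '[{"geography": "East", "sales": 150.0}, {"geography": "West", "sales": 400.0}] '
    "Let me know if you need anything else."
)
records = extract_json_records(response_text)
print(records)
```

<p>From there, <code class="language-plaintext highlighter-rouge">pd.DataFrame(records)</code> turns the list of records into a data frame.</p>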

<h2 id="step-5-visualizing-the-results">Step 5: Visualizing the Results</h2>

<p>With the results in a Pandas data frame, we can now report them. I’ll do this manually with Plotly, but a great challenge is to extend the code so that an AI agent writes the visualization code and executes it automatically.</p>

<p><img src="/assets/AI001_visualization.jpg" alt="Data Visualization" /></p>

<p>This visualization provides a clear view of sales distribution across different geographical regions.</p>

<p><strong>Quick Reminder:</strong> You can get all of the code and datasets shown in a Python Script and Jupyter Notebook when you <a href="https://learn.business-science.io/free-ai-tips?el=website">join my GenAI/ML Tips Newsletter</a>.</p>

<h1 id="conclusion">Conclusion</h1>

<p>By integrating Generative AI with data science, you’ve created a powerful tool that can interact with your data in natural language. This Pandas data analysis agent simplifies the process of extracting insights and lets non-technical stakeholders automate common data manipulations so they can make data-driven decisions.</p>

<p><strong>But there’s so much more to learn in Generative AI and data science.</strong></p>

<p>If you’re excited to become a Generative AI Data Scientist with Python, then keep reading…</p>

<h1 id="become-a-generative-ai-data-scientist">Become A Generative AI Data Scientist</h1>

<p>The future of data science is AI / ML.</p>

<p>I’ve helped 6,107+ students learn data science and now I’m helping them become Generative AI Data Scientists, skilled in combining Generative AI / ML. With this system they have:</p>

<ul>

<li><strong>Landed Promotions to Manager of AI/ML Teams ($200,000+ Role)</strong></li>

<li><strong>Made Proof-Of-Concepts for Clients ($25,000+ Consulting Projects)</strong></li>

<li><strong>Grew their data science skills with Generative AI (Career Growth)</strong></li>

</ul>

<h1 id="heres-the-system-they-are-taking-to-become-generative-ai-data-scientists">Here’s the system they are taking to become Generative AI Data Scientists:</h1>

<p><img src="/assets/generative_ai_bootcamp.jpg" alt="Generative AI Bootcamp" /></p>

<p>This is a <strong>Live 8-Week Generative AI Bootcamp</strong> for Data Scientists that covers:</p>

<ul>

<li>

<p><strong>Week 1:</strong> Live Kickoff Clinic + Local LLM Training + AI Fast Track</p>

</li>

<li>

<p><strong>Week 2:</strong> Retrieval Augmented Generation (RAG)</p>

</li>

<li>

<p><strong>Week 3:</strong> Business Intelligence AI Copilot (SQL + Pandas Tools)</p>

</li>

<li>

<p><strong>Week 4:</strong> Customer Analytics Team (Multi-Agent Workflows)</p>

</li>

<li>

<p><strong>Week 5:</strong> Time Series Forecasting Team (Multi-Agent Machine Learning Workflows)</p>

</li>

<li>

<p><strong>Week 6:</strong> LLM Model Deployment AWS Bedrock</p>

</li>

<li>

<p><strong>Week 7:</strong> Fine-Tuning LLM Models AWS Bedrock</p>

</li>

<li>

<p><strong>Week 8:</strong> AI App Deployment With AWS Cloud</p>

</li>

</ul>

<p style="font-size: 36px;text-align: center;">

<a href="https://learn.business-science.io/generative-ai-bootcamp-enroll">

<strong>Enroll In The Next Cohort Here</strong><br /><small style="font-size:24px;">(And Become A Generative AI Data Scientist in 2025)</small>

</a>

</p>

<p>Tags: Python-Bloggers, Learn-Python, Python, Pandas, OpenAI, LangChain, GenAI-ML-Tips</p>

<hr />

<h1 id="supply-chain-analysis-with-r-using-the-planr-package">Supply Chain Analysis with R Using the planr Package</h1>

<p class="text-center date">Published 2024-10-20T07:00:00-04:00 at <a href="https://www.business-science.io/code-tools/2024/10/20/supply-chain-analysis-in-R-using-planr">https://www.business-science.io/code-tools/2024/10/20/supply-chain-analysis-in-R-using-planr</a></p>

<p>Hey guys, welcome back to my <a href="https://learn.business-science.io/r-tips-newsletter?el=website">R-tips newsletter</a>. Supply chain management is essential in making sure that your company’s business runs smoothly. One of the key elements is <strong>managing inventory efficiently</strong>. Today, I’m going to show you how to estimate inventory and forecast inventory levels using the <code class="language-plaintext highlighter-rouge">planr</code> package in R. Let’s dive in!</p>

<h3 id="table-of-contents">Table of Contents</h3>

<p>Here’s what you’ll learn in this article:</p>

<ul>

<li><strong>Why Inventory Projections Are Crucial to Supply Chain Management</strong></li>

<li><strong>How to Use the <code class="language-plaintext highlighter-rouge">planr</code> Package to Project Inventories</strong>

<ul>

<li>Loading Supply Chain Data</li>

<li>Projecting Inventory Levels</li>

<li>Visualizing Demand Over Time</li>

<li>Creating Interactive Tables for Projected Inventories</li>

</ul>

</li>

<li><strong>Before You Go Any Further:</strong> <strong><a href="https://learn.business-science.io/r-tips-newsletter?el=website">Join the R-Tips Newsletter to get the Data and Code so you can follow along</a></strong></li>

</ul>

<p><img src="/assets/087_supply_chain_analytics_planr.jpg" alt="Supply Chain Analysis with R Using the planr Package" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 087 Folder)</a></p>

<hr />



<h1 id="r-tips-weekly">R-Tips Weekly</h1>

<p>This article is part of R-Tips Weekly, a <a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">weekly video tutorial</a> that shows you step-by-step how to do common R coding tasks. Pretty cool, right?</p>

<p>Here is the link to get set up. 👇</p>

<ul>

<li><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Sign up for our R-Tips Newsletter and get the code.</a></li>

</ul>

<p><img src="/assets/087_get_the_code.jpg" alt="Get the code" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 087 Folder)</a></p>

<h1 id="how-to-project-inventories-with-the-planr-package">How to Project Inventories with the <code class="language-plaintext highlighter-rouge">planr</code> Package</h1>

<h2 id="why-inventory-projections-are-crucial-to-supply-chain-management">Why Inventory Projections Are Crucial to Supply Chain Management</h2>

<p>Supply chain management is all about balancing <strong>supply and demand</strong> to ensure that inventory levels are optimized. Overestimating demand leads to excess stock, while underestimating it causes shortages. <strong>Accurate inventory projections</strong> allow businesses to plan ahead, make data-driven decisions, and avoid costly errors like over-buying inventory or running into a stockout with no inventory to meet demand.</p>

<h2 id="enter-the-planr-package">Enter the <code class="language-plaintext highlighter-rouge">planr</code> Package</h2>

<p>The <code class="language-plaintext highlighter-rouge">planr</code> package <a href="https://github.com/nguyennico/planr">simplifies inventory management</a> by projecting future inventory levels based on supply, demand, and current stock levels.</p>

<p><img src="/assets/087_planr_github.jpg" alt="Planr Github" /></p>

<h1 id="supply-chain-analysis-with-planr">Supply Chain Analysis with <code class="language-plaintext highlighter-rouge">planr</code></h1>

<p>Let’s take a look at how to use <code class="language-plaintext highlighter-rouge">planr</code> to optimize your supply chain. We’ll go through a quick tutorial to get you started using <code class="language-plaintext highlighter-rouge">planr</code> to project and manage inventories.</p>

<h2 id="step-1-load-libraries-and-data">Step 1: Load Libraries and Data</h2>

<p>First, you need to install the required packages and load the libraries. Run this code:</p>

<p><img src="/assets/087_libraries_data.jpg" alt="Libraries" /></p>

<p><img src="/assets/087_data.jpg" alt="Data" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 087 Folder)</a></p>

<p>This data contains <strong>supply and demand information</strong> for various demand fulfillment units (DFUs) over a period of time.</p>

<ul>

<li><strong>Demand Fulfillment Unit (DFU):</strong> A product identifier, here labeled as “Item 000001” (there are 10 items total).</li>

<li><strong>Period:</strong> Monthly periods corresponding to supply and demand.</li>

<li><strong>Demand:</strong> Units purchased by customers, which reduce on-hand inventory.</li>

<li><strong>Opening:</strong> An initial inventory of 6570 units in the first period for Item 000001.</li>

<li><strong>Supply:</strong> New supplies arriving in subsequent months.</li>

</ul>

<h2 id="step-2-visualizing-demand-over-time">Step 2: Visualizing Demand Over Time</h2>

<p>The first step in understanding supply chain performance is visualizing demand trends. We can use <code class="language-plaintext highlighter-rouge">timetk::plot_time_series()</code> to get a clear view of the demand fluctuations. Run this code:</p>

<p><img src="/assets/087_plot_code.jpg" alt="timetk::plot_time_series() code" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 087 Folder)</a></p>

<p>This code will produce a set of <strong>time series plots</strong> that show how demand changes over time for each DFU. By visualizing these trends, you can identify patterns and outliers that may impact your projections.</p>

<p><img src="/assets/087_demand_time_plot.jpg" alt="timetk plot time series plot" /></p>

<h2 id="step-3-projecting-inventory-levels">Step 3: Projecting Inventory Levels</h2>

<p>Once you have a good understanding of demand, the next step is to project your future inventory levels. The <code class="language-plaintext highlighter-rouge">planr::light_proj_inv()</code> function helps you do this. Run this code:</p>

<p><img src="/assets/087_light_proj_inv.jpg" alt="Light Inventory Projection" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 087 Folder)</a></p>

<p>This function takes in the DFU, Period, Demand, Opening stock, and Supply as inputs to <strong>project inventory levels over time by item</strong>. The output is a data frame that contains the projected inventories for each period and DFU.</p>
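<p>To show the arithmetic behind an inventory projection, here is a small Python illustration for a single item. It assumes the usual inventory balance (previous inventory plus supply minus demand) and is not <code class="language-plaintext highlighter-rouge">planr</code>’s own code, which may handle more cases. The function name and the numbers are made up.</p>

```python
def project_inventory(opening, demand, supply):
    """Project end-of-period inventory from opening stock, demand, and supply."""
    projected = []
    on_hand = opening
    for period_demand, period_supply in zip(demand, supply):
        # Inventory balance: what we had, plus receipts, minus what was sold.
        on_hand = on_hand + period_supply - period_demand
        projected.append(on_hand)
    return projected


# Hypothetical monthly figures for a single item (not taken from the dataset).
demand = [1000, 1200, 900, 1500]
supply = [0, 0, 2000, 0]
print(project_inventory(opening=3000, demand=demand, supply=supply))
# → [2000, 800, 1900, 400]
```

<p>A negative value in the result would flag a period where projected demand exceeds what is on hand, which is exactly the kind of stockout you want to see coming.</p>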

<h2 id="step-4-creating-an-interactive-table-for-projected-inventories">Step 4: Creating an Interactive Table for Projected Inventories</h2>

<p>To make your projections more interactive and accessible, you can create an interactive table using <code class="language-plaintext highlighter-rouge">reactable</code> and <code class="language-plaintext highlighter-rouge">reactablefmtr</code>. I’ve made a function to automate the process for you based on <code class="language-plaintext highlighter-rouge">planr</code>’s awesome documentation. Run this code:</p>

<p><img src="/assets/087_interactive_table_code.jpg" alt="Interactive Table Code" /></p>

<p><img src="/assets/087_projected_inventory_table.jpg" alt="Projected Inventory Table" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 087 Folder)</a></p>

<p>This generates a <strong>beautiful interactive table</strong> where you can filter and sort the projected inventories. Interactive tables make it easier to analyze your data and share insights with your team.</p>

<h1 id="conclusion">Conclusion</h1>

<p>By using the <code class="language-plaintext highlighter-rouge">planr</code> package, you can <strong>project inventory levels</strong> with ease, helping you manage your supply chain more effectively. This leads to better decision-making, reduced stockouts, and lower carrying costs.</p>

<p><strong>But there’s more to mastering supply chain analysis in R.</strong></p>

<p>If you would like to <strong>grow your Business Data Science skills with R</strong>, then please read on…</p>

<h1 id="need-to-advance-your-business-data-science-skills">Need to advance your business data science skills?</h1>

<p>I’ve helped 6,107+ students learn data science for business from an elite business consultant’s perspective.</p>

<p>I’ve worked with Fortune 500 companies like S&P Global, Apple, MRM McCann, and more.</p>

<p>And I built a training program that gets my students life-changing data science careers (don’t believe me? <a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series/">see my testimonials here</a>):</p>

<h4 class="text-center">

6-Figure Data Science Job at CVS Health ($125K)<br /><div style="height:10px;"></div>

Senior VP Of Analytics At JP Morgan ($200K)<br /><div style="height:10px;"></div>

50%+ Raises & Promotions ($150K)<br /><div style="height:10px;"></div>

Lead Data Scientist at Northwestern Mutual ($175K)<br /><div style="height:10px;"></div>

2X-ed Salary (From $60K to $120K)<br /><div style="height:10px;"></div>

2 Competing ML Job Offers ($150K)<br /><div style="height:10px;"></div>

Promotion to Lead Data Scientist ($175K)<br /><div style="height:10px;"></div>

Data Scientist Job at Verizon ($125K+)<br /><div style="height:10px;"></div>

Data Scientist Job at CitiBank ($100K + Bonus)<br /><div style="height:10px;"></div>

</h4>

<h1 id="whenever-you-are-ready-heres-the-system-they-are-taking">Whenever you are ready, here’s the system they are taking:</h1>

<p><a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series">Here’s the system</a> that has gotten aspiring data scientists, career transitioners, and lifelong learners data science jobs and promotions…</p>

<p><img src="/assets/rtrack_what_theyre_doing_2.jpg" alt="What They're Doing - 5 Course R-Track" /></p>

<p style="font-size: 36px;text-align: center;">

<a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series">

<strong>Join My 5-Course R-Track Program Now!</strong><br /><small style="font-size:24px;">(And Become The Data Scientist You Were Meant To Be...)</small>

</a>

</p>

<p>P.S. - Samantha landed her NEW Data Science R Developer job at CVS Health (Fortune 500). <a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series">This could be you.</a></p>

<p><img src="/img/success_samantha_got_job.jpg" alt="Success Samantha Got The Job" /></p>

<p>Tags: R-Bloggers, Learn-R, R, R-Tips, planr, Code-Tools</p>

<hr />

<h1 id="top-10-r-packages-for-exploratory-data-analysis-eda-bookmark-this">Top 10 R Packages for Exploratory Data Analysis (EDA) (Bookmark this!)</h1>

<p class="text-center date">Published 2024-10-03T12:33:00-04:00 at <a href="https://www.business-science.io/code-tools/2024/10/03/top-10-r-packages-for-eda">https://www.business-science.io/code-tools/2024/10/03/top-10-r-packages-for-eda</a></p>

<p>Hey guys, welcome back to my <a href="https://learn.business-science.io/r-tips-newsletter?el=website">R-tips newsletter</a>. Today, I’m excited to share with you the <strong>Top 10 R Packages for Exploratory Data Analysis (EDA)</strong>. These packages will help you streamline your data analysis workflow and gain deeper insights into your datasets. Let’s dive in!</p>

<h3 id="table-of-contents">Table of Contents</h3>

<p>Here’s what you’re learning today:</p>

<ul>

<li>

<p><strong>Importance of Exploratory Data Analysis</strong></p>

</li>

<li><strong>Top 10 R Packages for EDA:</strong>

<ul>

<li><code class="language-plaintext highlighter-rouge">skimr</code></li>

<li><code class="language-plaintext highlighter-rouge">psych</code></li>

<li><code class="language-plaintext highlighter-rouge">corrplot</code></li>

<li><code class="language-plaintext highlighter-rouge">PerformanceAnalytics</code></li>

<li><code class="language-plaintext highlighter-rouge">GGally</code></li>

<li><code class="language-plaintext highlighter-rouge">DataExplorer</code></li>

<li><code class="language-plaintext highlighter-rouge">summarytools</code></li>

<li><code class="language-plaintext highlighter-rouge">SmartEDA</code></li>

<li><code class="language-plaintext highlighter-rouge">janitor</code></li>

<li><code class="language-plaintext highlighter-rouge">inspectdf</code></li>

</ul>

</li>

<li>

<p><strong>BONUS: 5 More Underrated EDA Libraries in R</strong></p>

</li>

<li><strong>Get the Code</strong>: <strong><a href="https://learn.business-science.io/r-tips-newsletter?el=website">Join the R-Tips Newsletter</a></strong> to get the code and stay updated.</li>

</ul>

<p><img src="/assets/086_top_10_r_packages_eda.jpg" alt="Analyze Your Data Faster with gt_summarytools()" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>

<hr />


<h1 id="special-announcement-ai-for-data-scientists-workshop-on-december-18th">SPECIAL ANNOUNCEMENT: AI for Data Scientists Workshop on December 18th</h1>

<p><a href="https://learn.business-science.io/ai-register">Inside the workshop</a> I’ll share how I built a SQL-Writing Business Intelligence Agent with Generative AI:</p>

<p><img src="/assets/how_to_create_a_business_intelligence_ai_copilot.jpg" alt="Generative AI for Data Scientists" /></p>

<p><strong>What:</strong> GenAI for Data Scientists</p>

<p><strong>When:</strong> Wednesday December 18th, 2pm EST</p>

<p><strong>How It Will Help You:</strong> Whether you are new to data science or are an expert, Generative AI is changing the game. There’s a ton of hype. But how can Generative AI actually help you become a better data scientist and help you stand out in your career? I’ll show you inside <a href="https://learn.business-science.io/ai-register">my free Generative AI for Data Scientists workshop</a>.</p>

<p><strong>Price:</strong> Does <strong>Free</strong> sound good?</p>

<p><strong>How To Join:</strong> <a href="https://learn.business-science.io/ai-register"><strong>👉 Register Here</strong></a></p>

<hr />

<h1 id="r-tips-weekly">R-Tips Weekly</h1>

<p>This article is part of R-Tips Weekly, a <a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">weekly video tutorial</a> that shows you step-by-step how to do common R coding tasks. Pretty cool, right?</p>

<p>Here are the links to get set up. 👇</p>

<ul>

<li><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Sign up for our R-Tips Newsletter and get the code.</a></li>

<li><a href="https://youtu.be/57sLWdW3rWE">YouTube Tutorial</a></li>

</ul>

<h1 id="this-tutorial-is-available-in-video-12-minutes">This Tutorial is Available in Video (12-minutes)</h1>

<p>I have a 12-minute video that walks you through these top 10 R packages for EDA and how to use them in R. (These are the ones I use most commonly) 👇</p>

<iframe width="100%" height="450" src="https://www.youtube.com/embed/57sLWdW3rWE" title="YouTube video player" frameborder="1" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen=""></iframe>

<h1 id="importance-of-exploratory-data-analysis">Importance of Exploratory Data Analysis</h1>

<p><strong>Exploratory Data Analysis (EDA)</strong> is a crucial step in any data science project. It helps you understand the underlying structure of your data, identify patterns, detect anomalies, and test hypotheses. EDA enables you to make informed decisions about data cleaning, feature selection, and model selection.</p>

<h1 id="top-10-r-packages-for-eda">Top 10 R Packages for EDA</h1>

<p>To make your EDA process more efficient and insightful, here are the top 10 R packages you should know. <a href="https://learn.business-science.io/r-tips-newsletter?el=website">Get the R code and dataset so you can follow along here.</a></p>

<h2 id="setup-the-eda-packages-and-dataset-in-r">Set Up the EDA Packages and Dataset in R:</h2>

<p>First, make sure you install all of the R packages I’ll be demo-ing today. Then load the data set I’ll be using so you can reproduce the results. Run this code:</p>

<p><img src="/assets/086_libraries_data.jpg" alt="Libraries and Data" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
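<p>If you don’t have the newsletter code handy, here is a minimal setup sketch. The package names come straight from this article; the <code class="language-plaintext highlighter-rouge">eda_data</code> name and the use of the built-in <code class="language-plaintext highlighter-rouge">mtcars</code> dataset are my own stand-ins (assumptions), not the dataset used in the video.</p>

```r
# Package names are taken from the article. The install step is commented out
# so nothing gets installed until you decide to run it.
eda_packages <- c(
  "skimr", "psych", "corrplot", "PerformanceAnalytics", "GGally",
  "DataExplorer", "summarytools", "SmartEDA", "janitor", "inspectdf"
)

# Which of the ten packages are not installed yet?
missing_packages <- setdiff(eda_packages, rownames(installed.packages()))
print(missing_packages)
# install.packages(missing_packages)

# ASSUMPTION: mtcars is a stand-in for the newsletter dataset
eda_data <- mtcars
str(eda_data)
```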

<h2 id="1-skimr-summary-of-the-dataset">1. skimr: Summary of the Dataset</h2>

<p><code class="language-plaintext highlighter-rouge">skimr</code> provides a convenient and elegant summary of your data. Run this code:</p>

<ul>

<li>I made a deeper writeup on <code class="language-plaintext highlighter-rouge">skimr</code>: <a href="https://www.business-science.io/code-tools/2021/03/09/data-quality-with-skimr.html">Get the deep-dive here.</a></li>

</ul>

<p><img src="/assets/086_skimr.jpg" alt="skimr summary of dataset" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
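<p>A minimal sketch of the <code class="language-plaintext highlighter-rouge">skimr</code> step, assuming the built-in <code class="language-plaintext highlighter-rouge">mtcars</code> data as a stand-in for the article’s dataset:</p>

```r
library(skimr)

# ASSUMPTION: mtcars is a stand-in dataset, not the one from the article
skim_result <- skim(mtcars)

# One row per column, with missing counts, quantiles, and inline histograms
print(skim_result)
```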

<h2 id="2-psych-descriptive-statistics">2. psych: Descriptive Statistics</h2>

<p>The <code class="language-plaintext highlighter-rouge">psych</code> package offers functions for psychological, psychometric, and personality research, including descriptive statistics. Run this code:</p>

<ul>

<li>We’ll use the <code class="language-plaintext highlighter-rouge">describe()</code> function.</li>

<li>I personally like to output tables, so optionally you can use <code class="language-plaintext highlighter-rouge">gt::gt()</code> to convert to a GT HTML table. (<a href="https://www.business-science.io/code-tools/2023/08/06/tables-in-r-from-excel.html">I made a deep dive on the GT R package here.</a>)</li>

</ul>

<p><img src="/assets/086_psyche_describe.jpg" alt="Get Descriptive Statistics with Psych" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
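<p>Here is roughly what the two bullets above look like in code. This is a sketch on the built-in <code class="language-plaintext highlighter-rouge">mtcars</code> data (my assumption, not the article’s dataset), and the object names are placeholders I made up.</p>

```r
library(psych)
library(gt)

# ASSUMPTION: mtcars is a stand-in dataset
desc_stats <- describe(mtcars)
print(desc_stats)

# Optional: convert to a gt HTML table.
# describe() keeps variable names as row names, so move them into the table stub.
desc_table <- gt(as.data.frame(desc_stats), rownames_to_stub = TRUE)
desc_table
```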

<h2 id="3-corrplot-correlation-matrix-visualization">3. corrplot: Correlation Matrix Visualization</h2>

<p><code class="language-plaintext highlighter-rouge">corrplot</code> visualizes correlation matrices using various correlation methods. There are a ton of customizations you can do. Run this code:</p>

<p><img src="/assets/086_corrplot.jpg" alt="Correlation Matrix Visualization with Corrplot" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
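<p>A minimal <code class="language-plaintext highlighter-rouge">corrplot</code> sketch, again assuming <code class="language-plaintext highlighter-rouge">mtcars</code> as a stand-in dataset. The specific options below are just one combination I picked to show the kind of customization available.</p>

```r
library(corrplot)

# ASSUMPTION: mtcars is a stand-in dataset (all columns are numeric)
cor_matrix <- cor(mtcars)

# Upper triangle only, circles sized by correlation, variables ordered by clustering
corrplot(
  cor_matrix,
  method = "circle",
  type   = "upper",
  order  = "hclust",
  tl.col = "black"
)
```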

<h2 id="4-performanceanalytics-correlation-matrix-with-scatterplots-and-histograms">4. PerformanceAnalytics: Correlation Matrix with Scatterplots and Histograms</h2>

<p><code class="language-plaintext highlighter-rouge">PerformanceAnalytics</code> provides advanced charts and statistical functions for financial analysis (I actually use PerformanceAnalytics inside my <code class="language-plaintext highlighter-rouge">tidyquant</code> package for easier financial analysis). But, most people have no idea it has an amazing <code class="language-plaintext highlighter-rouge">chart.Correlation()</code> function that is fast and awesome. Run this code:</p>

<p><img src="/assets/086_chart_correlation.jpg" alt="PerformanceAnalytics Chart Correlation" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
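<p>A short sketch of <code class="language-plaintext highlighter-rouge">chart.Correlation()</code>. The four <code class="language-plaintext highlighter-rouge">mtcars</code> columns are an arbitrary choice of mine to keep the chart readable; swap in your own numeric columns.</p>

```r
library(PerformanceAnalytics)

# ASSUMPTION: a small numeric subset of mtcars stands in for the article's data
numeric_subset <- mtcars[, c("mpg", "disp", "hp", "wt")]

# Histograms on the diagonal, scatterplots below, correlations above
chart.Correlation(numeric_subset, histogram = TRUE, method = "pearson")
```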

<h2 id="5-ggally-scatterplot-matrix-with-pairwise-relationships">5. GGally: Scatterplot Matrix with Pairwise Relationships</h2>

<p><code class="language-plaintext highlighter-rouge">GGally</code> extends ggplot2 by adding several functions to reduce the complexity of combining geometric objects. The <code class="language-plaintext highlighter-rouge">ggpairs()</code> function is one of my favorite functions for assessing Pairwise Relationships. So powerful. Run this code:</p>

<p><img src="/assets/086_ggally.jpg" alt="GGally Pairwise Relationships" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
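<p>A minimal <code class="language-plaintext highlighter-rouge">ggpairs()</code> sketch. I’m assuming the built-in <code class="language-plaintext highlighter-rouge">iris</code> data here because it has a categorical column to color by; the article’s own dataset will differ.</p>

```r
library(GGally)
library(ggplot2)

# ASSUMPTION: iris is a stand-in dataset; Species is used as the color grouping
pairs_plot <- ggpairs(iris, mapping = aes(colour = Species))

# Printing draws the full matrix of pairwise plots
print(pairs_plot)
```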

<h2 id="6-dataexplorer-generate-a-full-eda-report">6. DataExplorer: Generate a Full EDA Report</h2>

<p><code class="language-plaintext highlighter-rouge">DataExplorer</code> automates the EDA process and generates comprehensive reports. Run this code:</p>

<ul>

<li>I did a Deeper Dive on Data Explorer (<a href="https://www.business-science.io/code-tools/2021/03/02/use-dataexplorer-for-EDA.html">Get my deep-dive here.</a>)</li>

</ul>

<p><img src="/assets/086_dataexplorer.jpg" alt="DataExplorer" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
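<p>A sketch of the <code class="language-plaintext highlighter-rouge">DataExplorer</code> workflow on <code class="language-plaintext highlighter-rouge">mtcars</code> (a stand-in dataset). The report file name and the choice of <code class="language-plaintext highlighter-rouge">mpg</code> as the response are my assumptions. The report call is wrapped in <code class="language-plaintext highlighter-rouge">interactive()</code> because it renders an R Markdown document and can take a little while.</p>

```r
library(DataExplorer)

# ASSUMPTION: mtcars is a stand-in dataset
overview <- introduce(mtcars)   # row/column counts, missing values, memory usage
print(overview)

plot_missing(mtcars)            # share of missing values per column

# Full HTML report; the file name and response variable are placeholders
if (interactive()) {
  create_report(mtcars, output_file = "eda_report.html", y = "mpg")
}
```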

<h2 id="7-summarytools-summary-table-for-the-dataset">7. summarytools: Summary Table for the Dataset</h2>

<p><code class="language-plaintext highlighter-rouge">summarytools</code> provides tools to neatly and quickly summarize data. Run this code:</p>

<ul>

<li>I did a deep dive on <code class="language-plaintext highlighter-rouge">summarytools</code> (<a href="https://www.business-science.io/code-tools/2024/09/15/summarytools.html">Get the deep dive here.</a>)</li>

<li>I’m a big fan of <code class="language-plaintext highlighter-rouge">gt</code> tables, so I converted <code class="language-plaintext highlighter-rouge">summarytools</code> to gt (<a href="https://www.business-science.io/code-tools/2024/09/22/gt-summarytools.html">get that article here.</a>)</li>

</ul>

<p><img src="/assets/086_summarytools.jpg" alt="Summarytools" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
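<p>A minimal <code class="language-plaintext highlighter-rouge">summarytools</code> sketch, assuming the built-in <code class="language-plaintext highlighter-rouge">iris</code> data as a stand-in:</p>

```r
library(summarytools)

# ASSUMPTION: iris is a stand-in dataset
df_summary <- dfSummary(iris)

# Console version of the summary table
print(df_summary)

# HTML version in the RStudio viewer or browser
if (interactive()) {
  view(df_summary)
}
```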

<h2 id="8-smarteda-generate-a-detailed-eda-report-in-html">8. SmartEDA: Generate a Detailed EDA Report in HTML</h2>

<p><code class="language-plaintext highlighter-rouge">SmartEDA</code> creates automated EDA reports with detailed analyses. This is a newer package, but I already love it. Run this code:</p>

<p><img src="/assets/086_smarteda.jpg" alt="SmartEDA" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
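<p>A sketch of <code class="language-plaintext highlighter-rouge">SmartEDA</code> on <code class="language-plaintext highlighter-rouge">mtcars</code> (a stand-in dataset). The report file name is a placeholder of mine, and the report call is guarded with <code class="language-plaintext highlighter-rouge">interactive()</code> since it renders a full HTML document.</p>

```r
library(SmartEDA)

# ASSUMPTION: mtcars is a stand-in dataset
data_overview <- ExpData(data = mtcars, type = 1)       # dataset-level overview
print(data_overview)

variable_overview <- ExpData(data = mtcars, type = 2)   # one row per variable
print(variable_overview)

# Full HTML report; the file name is a placeholder
if (interactive()) {
  ExpReport(mtcars, op_file = "smarteda_report.html")
}
```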

<h2 id="9-janitor-frequency-table-for-a-categorical-variable">9. janitor: Frequency Table for a Categorical Variable</h2>

<p><code class="language-plaintext highlighter-rouge">janitor</code> helps with data cleaning tasks, including frequency tables. We’ll use <code class="language-plaintext highlighter-rouge">tabyl()</code> to create a frequency table and the <code class="language-plaintext highlighter-rouge">adorn_*</code> functions to modify the table. Run this code:</p>

<p><img src="/assets/086_janitor_tabyl.jpg" alt="Janitor Tabyl" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
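<p>A minimal sketch of <code class="language-plaintext highlighter-rouge">tabyl()</code> plus two <code class="language-plaintext highlighter-rouge">adorn_*</code> helpers. I’m assuming <code class="language-plaintext highlighter-rouge">mtcars</code> and its <code class="language-plaintext highlighter-rouge">cyl</code> column as the categorical variable; use whichever column you care about.</p>

```r
library(janitor)

# ASSUMPTION: mtcars$cyl is a stand-in categorical variable
cyl_counts <- tabyl(mtcars, cyl)          # columns: cyl, n, percent

# Add a totals row, then format the percent column as text like "34.4%"
cyl_table <- adorn_totals(cyl_counts, where = "row")
cyl_table <- adorn_pct_formatting(cyl_table, digits = 1)

print(cyl_table)
```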

<h2 id="10-inspectdf-visualize-missing-values-in-the-dataset">10. inspectdf: Visualize Missing Values in the Dataset</h2>

<p><code class="language-plaintext highlighter-rouge">inspectdf</code> provides tools to visualize data frames, including missing values and correlations. Run this code:</p>

<p><img src="/assets/086_inspectdf.jpg" alt="InspectDF" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>
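<p>A short <code class="language-plaintext highlighter-rouge">inspectdf</code> sketch. I’m assuming the built-in <code class="language-plaintext highlighter-rouge">airquality</code> data here because it actually contains missing values, which makes the plot more interesting.</p>

```r
library(inspectdf)

# ASSUMPTION: airquality is a stand-in dataset with real missing values
na_summary <- inspect_na(airquality)   # columns: col_name, cnt, pcnt
print(na_summary)

# Bar chart of missingness by column
show_plot(na_summary)
```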

<h1 id="bonus-five-5-underrated-eda-libraries-in-r">Bonus: Five (5) Underrated EDA Libraries in R:</h1>

<p>I had to call it quits at 10. But here are 5 more up-and-coming EDA libraries that are underrated:</p>

<ol>

<li>

<p><strong>Radiant:</strong> A shiny app for creating reproducible business and data analytics reports. <a href="https://www.business-science.io/code-tools/2022/02/01/business-analytics-radiant.html">Get my radiant deep dive here.</a></p>

</li>

<li>

<p><strong>Correlationfunnel:</strong> I use this R package all the time for quick correlation analysis and detecting critical relationships. Full Disclosure: I authored this R package. (<a href="https://www.business-science.io/code-tools/2019/08/07/correlationfunnel.html">Get the introduction here.</a>)</p>

</li>

<li>

<p><strong>GWalkR:</strong> Like Tableau in R for $0. <a href="https://www.business-science.io/code-tools/2024/08/09/tableau-in-r-gwalkr.html">Get my GWalkR deep-dive here.</a></p>

</li>

<li>

<p><strong>Esquisse:</strong> Also like Tableau in R for $0. <a href="https://www.business-science.io/code-tools/2021/03/23/ggplot-code-with-tableau-esquisse.html">Get my Esquisse deep-dive here.</a></p>

</li>

<li>

<p><strong>Explore:</strong> A simple shiny app for quickly exploring data. <a href="https://www.business-science.io/code-tools/2022/09/23/explore-simplified-exploratory-data-analysis-eda-in-r.html">Get my explore deep-dive here.</a></p>

</li>

</ol>

<h1 id="want-the-full-r-code">Want the Full R Code?</h1>

<p>To get access to the full source code for this tutorial, subscribe to the <a href="https://learn.business-science.io/r-tips-newsletter?el=website">R-Tips Newsletter</a>. This code is available exclusively to subscribers!</p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 086 Folder)</a></p>

<h1 id="conclusion-enhance-your-data-analysis-workflow">Conclusion: Enhance Your Data Analysis Workflow</h1>

<p>By using these top 10 R packages for EDA, you can significantly enhance your exploratory data analysis workflow, gain deeper insights, and make data-driven decisions more effectively.</p>

<p><strong>But there’s more to becoming a data scientist.</strong></p>

<p>If you would like to <strong>grow your Business Data Science skills with R</strong>, then please read on…</p>

<h1 id="need-to-advance-your-business-data-science-skills">Need to advance your business data science skills?</h1>

<p>I’ve helped 6,107+ students learn data science for business from an elite business consultant’s perspective.</p>

<p>I’ve worked with Fortune 500 companies like S&P Global, Apple, MRM McCann, and more.</p>

<p>And I built a training program that gets my students life-changing data science careers (don’t believe me? <a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series/">see my testimonials here</a>):</p>

<h4 class="text-center">

6-Figure Data Science Job at CVS Health ($125K)<br /><div style="height:10px;"></div>

Senior VP Of Analytics At JP Morgan ($200K)<br /><div style="height:10px;"></div>

50%+ Raises & Promotions ($150K)<br /><div style="height:10px;"></div>

Lead Data Scientist at Northwestern Mutual ($175K)<br /><div style="height:10px;"></div>

2X-ed Salary (From $60K to $120K)<br /><div style="height:10px;"></div>

2 Competing ML Job Offers ($150K)<br /><div style="height:10px;"></div>

Promotion to Lead Data Scientist ($175K)<br /><div style="height:10px;"></div>

Data Scientist Job at Verizon ($125K+)<br /><div style="height:10px;"></div>

Data Scientist Job at CitiBank ($100K + Bonus)<br /><div style="height:10px;"></div>

</h4>

<h1 id="whenever-you-are-ready-heres-the-system-they-are-taking">Whenever you are ready, here’s the system they are taking:</h1>

<p><a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series">Here’s the system</a> that has gotten aspiring data scientists, career transitioners, and lifelong learners data science jobs and promotions…</p>

<p><img src="/assets/rtrack_what_theyre_doing_2.jpg" alt="What They're Doing - 5 Course R-Track" /></p>

<p style="font-size: 36px;text-align: center;">

<a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series">

<strong>Join My 5-Course R-Track Program Now!</strong><br /><small style="font-size:24px;">(And Become The Data Scientist You Were Meant To Be...)</small>

</a>

</p>

<p>P.S. - Samantha landed her NEW Data Science R Developer job at CVS Health (Fortune 500). <a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series">This could be you.</a></p>

<p><img src="/img/success_samantha_got_job.jpg" alt="Success Samantha Got The Job" /></p>

R-BloggersLearn-RRR-TipsEDACode-ToolsIntroducing gt_summarytools: Analyze Your Data Faster With R2024-09-22 07:00:002024-09-22T07:00:00-04:00https://www.business-science.io/code-tools/2024/09/22/gt-summarytools<p>Hey guys, welcome back to my <a href="https://learn.business-science.io/r-tips-newsletter?el=website">R-tips newsletter</a>. In today’s fast-paced data science environment, speeding up exploratory data analysis (EDA) is more critical than ever. This is where <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> comes in. A new function I’ve developed, <code class="language-plaintext highlighter-rouge">gt_summarytools()</code>, combines the best features of <code class="language-plaintext highlighter-rouge">gt</code> and <code class="language-plaintext highlighter-rouge">summarytools</code>, allowing you to create detailed, interactive data summaries faster and with more flexibility than ever. Let’s go!</p>

<h3 id="table-of-contents">Table of Contents</h3>

<p>Here’s what you’re learning today:</p>

<ul>

<li>

<p><strong>Why Quick Data Analysis Matters</strong></p>

</li>

<li><strong>Introducing <code class="language-plaintext highlighter-rouge">gt_summarytools()</code></strong>:

<ul>

<li>Combining the Best of <code class="language-plaintext highlighter-rouge">gt</code> and <code class="language-plaintext highlighter-rouge">summarytools</code></li>

<li>Creating Summaries with <code class="language-plaintext highlighter-rouge">gt_summarytools()</code></li>

</ul>

</li>

<li><strong>Get the Code</strong>: <strong><a href="https://learn.business-science.io/r-tips-newsletter?el=website">Join the R-Tips Newsletter</a></strong> to get the code and stay updated.</li>

</ul>

<p><img src="/assets/085_introducing_gt_summarytools.jpg" alt="Analyze Your Data Faster with gt_summarytools()" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 085 Folder)</a></p>

<hr />



<h1 id="r-tips-weekly">R-Tips Weekly</h1>

<p>This article is part of R-Tips Weekly, a <a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">weekly video tutorial</a> that shows you step-by-step how to do common R coding tasks. Pretty cool, right?</p>

<p>Here are the links to get set up. 👇</p>

<ul>

<li><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Sign up for our R-Tips Newsletter and get the code.</a></li>

<li><a href="https://youtu.be/vQ6rU-SJonY">YouTube Tutorial</a></li>

</ul>

<h1 id="this-tutorial-is-available-in-video-9-minutes">This Tutorial is Available in Video (9-minutes)</h1>

<p>I have a 9-minute video that walks you through setting up <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> in R and running your first exploratory data analysis with it. 👇</p>

<iframe width="100%" height="450" src="https://www.youtube.com/embed/vQ6rU-SJonY" title="YouTube video player" frameborder="1" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen=""></iframe>

<h1 id="why-quick-data-analysis-matters">Why Quick Data Analysis Matters</h1>

<p>Exploratory Data Analysis is crucial for understanding your data, spotting trends, and detecting issues before diving into more advanced modeling techniques. But EDA can often be a time-consuming task if you’re not using the right tools.</p>

<p>That’s why I developed <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> — to provide a faster, more efficient way to analyze your data using the power of <code class="language-plaintext highlighter-rouge">gt</code> and <code class="language-plaintext highlighter-rouge">summarytools</code>.</p>

<h1 id="introducing-gt_summarytools">Introducing gt_summarytools()</h1>

<p>If you’ve used <code class="language-plaintext highlighter-rouge">summarytools</code> for generating quick summaries and <code class="language-plaintext highlighter-rouge">gt</code> for creating visually appealing tables, you’ll love this new function. <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> combines the two, allowing you to get the best of both worlds: concise, visually rich summaries that are easy to generate and interpret.</p>

<p>Here’s one of the summaries we will create today with <code class="language-plaintext highlighter-rouge">gt_summarytools()</code>:</p>

<p><img src="/assets/085_customer_churn_summary.jpg" alt="GT summarytools" /></p>

<h2 id="combining-the-best-of-gt-and-summarytools">Combining the Best of gt and summarytools</h2>

<p>Here’s how it works:</p>

<ul>

<li>

<p><code class="language-plaintext highlighter-rouge">gt</code>: A package for creating publication-quality tables.</p>

</li>

<li>

<p><code class="language-plaintext highlighter-rouge">summarytools</code>: Known for its powerful <code class="language-plaintext highlighter-rouge">dfSummary()</code> function that provides a detailed overview of your data frame.</p>

</li>

<li>

<p><code class="language-plaintext highlighter-rouge">gt_summarytools()</code>: The perfect combination of the two, giving you a beautiful summary table with just a few lines of code.</p>

</li>

</ul>
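<p>To make the two building blocks concrete, here is a small sketch that runs each one on its own. This is not the <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> source (that lives in the newsletter folder); it only shows the standard <code class="language-plaintext highlighter-rouge">dfSummary()</code> and <code class="language-plaintext highlighter-rouge">gt()</code> calls, with <code class="language-plaintext highlighter-rouge">iris</code> as an assumed stand-in dataset.</p>

```r
library(summarytools)
library(gt)

# ASSUMPTION: iris is a stand-in dataset

# Building block 1: summarytools gives a per-column overview of the data frame
column_summary <- dfSummary(iris)
print(column_summary)

# Building block 2: gt turns any data frame into a styled HTML table
basic_table <- gt(head(iris))
basic_table <- tab_header(basic_table, title = "First Rows of iris")
basic_table
```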

<p>Let’s dive into a demo!</p>

<h1 id="code-demo-gt_summarytools-in-action">Code Demo: <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> in Action</h1>

<p>I’ve developed this function to help you summarize your data faster and with more visual appeal. Let’s take a look at the new code demo, exclusively for <a href="https://learn.business-science.io/r-tips-newsletter?el=website">R-tips newsletter</a> subscribers.</p>

<p><img src="/assets/085_get_the_gt_summarytools_code.jpg" alt="Get the Code" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 085 Folder)</a></p>

<h2 id="step-1-load-libraries-and-data">Step 1: Load Libraries and Data</h2>

<p>Run this code to load the libraries and data:</p>

<p><img src="/assets/085_libraries_data.jpg" alt="Libraries and Data" /></p>

<h2 id="step-2-load-the-source-code-for-gt_summarytools">Step 2: Load the source code for <code class="language-plaintext highlighter-rouge">gt_summarytools()</code></h2>

<p>Next, source the code for the <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> function (<a href="https://learn.business-science.io/r-tips-newsletter?el=website">it’s in the R-Tip 085 Folder</a>).</p>

<p>Run this code:</p>

<p><img src="/assets/085_source_code.jpg" alt="Source the gt_summarytools_code" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 085 Folder)</a></p>

<h2 id="step-3-run-gt_summarytools-on-the-datasets-provided">Step 3: Run <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> on the datasets provided</h2>

<p>We can generate quick summaries using <code class="language-plaintext highlighter-rouge">gt_summarytools()</code>. Run this code:</p>

<p><img src="/assets/085_running_gt_summarytools.jpg" alt="Running gt_summarytools" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 085 Folder)</a></p>

<p>Here, we’re using the <code class="language-plaintext highlighter-rouge">gt_summarytools()</code> function to generate a beautiful table summarizing the churn data and stock data. These tables are not only functional but visually appealing, thanks to the <code class="language-plaintext highlighter-rouge">gt_theme_538()</code> theme, which adds a clean, professional style.</p>
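<p>The <code class="language-plaintext highlighter-rouge">gt_theme_538()</code> theme comes from the <code class="language-plaintext highlighter-rouge">gtExtras</code> package and can be applied to any <code class="language-plaintext highlighter-rouge">gt</code> table. Here is a sketch on a plain table, assuming <code class="language-plaintext highlighter-rouge">mtcars</code> as stand-in data. It illustrates the theme only, not the internals of <code class="language-plaintext highlighter-rouge">gt_summarytools()</code>.</p>

```r
library(gt)
library(gtExtras)

# ASSUMPTION: the first rows of mtcars stand in for the churn and stock data
plain_table  <- gt(head(mtcars), rownames_to_stub = TRUE)

# Apply the FiveThirtyEight-style theme from gtExtras
styled_table <- gt_theme_538(plain_table)
styled_table
```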

<p>Let’s examine the output:</p>

<h3 id="customer-churn-summary">Customer Churn Summary:</h3>

<p><img src="/assets/085_customer_churn_summary.jpg" alt="Customer Churn Summary" /></p>

<h3 id="stock-data-summary">Stock Data Summary:</h3>

<p><img src="/assets/085_stock_data_summary.jpg" alt="Stock Data Summary" /></p>

<h1 id="want-the-full-code">Want the Full Code?</h1>

<p>To get access to the full source code for <code class="language-plaintext highlighter-rouge">gt_summarytools()</code>, subscribe to the <a href="https://learn.business-science.io/r-tips-newsletter?el=website">R-Tips Newsletter</a>. This code is available exclusively to subscribers!</p>

<p><img src="/assets/085_get_the_gt_summarytools_code.jpg" alt="Source Code" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 085 Folder)</a></p>

<h1 id="conclusion-save-time-and-analyze-faster">Conclusion: Save Time and Analyze Faster</h1>

<p>By leveraging <code class="language-plaintext highlighter-rouge">gt_summarytools()</code>, you can significantly speed up your data analysis workflow, all while generating better-looking tables. This function simplifies the process of data exploration, making it easier to gain insights and focus on decision-making and modeling.</p>


How to Analyze Your Data Faster With R Using summarytools
2024-09-15T07:00:00-04:00
https://www.business-science.io/code-tools/2024/09/15/summarytools

<p>Hey guys, welcome back to my <a href="https://learn.business-science.io/r-tips-newsletter?el=website">R-tips newsletter</a>. Getting quick insights into your data is absolutely critical to data understanding, predictive modeling, and production. But it can be challenging if you’re just getting started. Today, I’m going to show you how to <strong>analyze your data faster using the <code class="language-plaintext highlighter-rouge">summarytools</code> package in R</strong>. Let’s go!</p>

<h3 id="table-of-contents">Table of Contents</h3>

<p>Here’s what you’re learning today:</p>

<ul>

<li><strong>Why Quick Data Analysis is Important</strong></li>

<li><strong>How to Use <code class="language-plaintext highlighter-rouge">summarytools</code> to Summarize Your Data</strong>

<ul>

<li>Data Frame Summaries with <code class="language-plaintext highlighter-rouge">dfSummary()</code></li>

<li>Descriptive Statistics with <code class="language-plaintext highlighter-rouge">descr()</code></li>

<li>Frequency Tables with <code class="language-plaintext highlighter-rouge">freq()</code></li>

</ul>

</li>

<li><strong>Next Steps:</strong> <strong><a href="https://learn.business-science.io/r-tips-newsletter?el=website">Join the R-Tips Newsletter</a></strong> to get the code and stay updated.</li>

</ul>

<p><img src="/assets/084_analyze_your_data_faster_with_r.jpg" alt="Analyze Your Data Faster with R" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 084 Folder)</a></p>

<hr />


<h1 id="special-announcement-ai-for-data-scientists-workshop-on-december-18th">SPECIAL ANNOUNCEMENT: AI for Data Scientists Workshop on December 18th</h1>

<p><a href="https://learn.business-science.io/ai-register">Inside the workshop</a> I’ll share how I built a SQL-Writing Business Intelligence Agent with Generative AI:</p>

<p><img src="/assets/how_to_create_a_business_intelligence_ai_copilot.jpg" alt="Generative AI for Data Scientists" /></p>

<p><strong>What:</strong> GenAI for Data Scientists</p>

<p><strong>When:</strong> Wednesday December 18th, 2pm EST</p>

<p><strong>How It Will Help You:</strong> Whether you are new to data science or are an expert, Generative AI is changing the game. There’s a ton of hype. But how can Generative AI actually help you become a better data scientist and help you stand out in your career? I’ll show you inside <a href="https://learn.business-science.io/ai-register">my free Generative AI for Data Scientists workshop</a>.</p>

<p><strong>Price:</strong> Does <strong>Free</strong> sound good?</p>

<p><strong>How To Join:</strong> <a href="https://learn.business-science.io/ai-register"><strong>👉 Register Here</strong></a></p>

<hr />

<h1 id="r-tips-weekly">R-Tips Weekly</h1>

<p>This article is part of R-Tips Weekly, a <a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">weekly video tutorial</a> that shows you step-by-step how to do common R coding tasks. Pretty cool, right?</p>

<p>Here are the links to get set up. 👇</p>

<ul>

<li><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Sign up for our R-Tips Newsletter and get the code.</a></li>

<li><a href="https://youtu.be/GDDzwpFBROg">YouTube Tutorial</a></li>

</ul>

<h1 id="this-tutorial-is-available-in-video-6-minutes">This Tutorial is Available in Video (6-minutes)</h1>

<p>I have a 6-minute video that walks you through setting up <code class="language-plaintext highlighter-rouge">summarytools</code> in R and running your first exploratory data analysis with it. 👇</p>

<iframe width="100%" height="450" src="https://www.youtube.com/embed/GDDzwpFBROg" title="YouTube video player" frameborder="1" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen=""></iframe>

<h1 id="how-to-analyze-your-data-faster-with-r-using-summarytools">How to Analyze Your Data Faster with R Using <code class="language-plaintext highlighter-rouge">summarytools</code></h1>

<h2 id="why-quick-data-analysis-is-important">Why Quick Data Analysis is Important</h2>

<p>In the fast-paced world of data science, getting quick insights into your data is crucial. It allows you to understand your data better, make informed decisions, and expedite the modeling process. However, performing exploratory data analysis (EDA) can be time-consuming if you’re not using the right tools.</p>

<h2 id="enter-summarytools">Enter <code class="language-plaintext highlighter-rouge">summarytools</code></h2>

<p>The <code class="language-plaintext highlighter-rouge">summarytools</code> package in R simplifies the process of data exploration by providing functions that generate comprehensive summaries of your data with minimal code.</p>

<p><img src="/assets/084_summarytools_github.jpg" alt="Summary Tools in R" /></p>

<p>Let’s dive into how you can use <code class="language-plaintext highlighter-rouge">summarytools</code> to speed up your data analysis.</p>

<h1 id="getting-started-with-summarytools">Getting Started with <code class="language-plaintext highlighter-rouge">summarytools</code></h1>

<p>I’ll show off some of the most important functionality in <code class="language-plaintext highlighter-rouge">summarytools</code>. I’ll use a customer churn dataset. <a href="https://learn.business-science.io/r-tips-newsletter?el=website">You can get all of the data and code here (it’s in the R-Tip 084 Folder)</a>.</p>

<h2 id="step-1-load-libraries-and-data">Step 1: Load Libraries and Data</h2>

<p>First, make sure you have the <code class="language-plaintext highlighter-rouge">summarytools</code> and <code class="language-plaintext highlighter-rouge">tidyverse</code> packages installed. Then load the libraries and data needed to complete this tutorial.</p>

<p><img src="/assets/084_libraries_data.jpg" alt="Libraries and Data" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Data and Code (In the R-Tip 084 Folder)</a></p>
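<p>Since the code for this step is shown as an image, here is a minimal sketch of what loading the libraries and data might look like. The file, column names, and values below are hypothetical stand-ins (the real churn dataset is in the R-Tip 084 folder), so treat this as an illustration under those assumptions rather than the exact code from the tutorial:</p>

```r
# install.packages(c("summarytools", "tidyverse"))  # run once if needed
library(summarytools)
library(tidyverse)

# Hypothetical stand-in for the customer churn CSV (not the tutorial's real data)
csv_path <- tempfile(fileext = ".csv")

tibble(
  customer_id     = sprintf("C%03d", 1:6),
  tenure          = c(1, 34, 2, 45, 8, 22),
  monthly_charges = c(29.85, 56.95, 53.85, 42.30, 99.65, 89.10),
  contract        = c("Month-to-month", "One year", "Month-to-month",
                      "One year", "Month-to-month", "Two year"),
  churn           = c("No", "No", "Yes", "No", "Yes", "No")
) %>%
  write_csv(csv_path)

# Read the data back in, the same way you would read the real file
churn_tbl <- read_csv(csv_path, show_col_types = FALSE)

# Quick look at column names and types before summarizing
glimpse(churn_tbl)
```

<p>With the real data, you would skip the <code class="language-plaintext highlighter-rouge">write_csv()</code> part and point <code class="language-plaintext highlighter-rouge">read_csv()</code> at the downloaded file instead.</p>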

<h2 id="step-2-data-frame-summaries-with-dfsummary">Step 2: Data Frame Summaries with <code class="language-plaintext highlighter-rouge">dfSummary()</code></h2>

<p>The <code class="language-plaintext highlighter-rouge">dfSummary()</code> function provides a detailed summary of your data frame, including:</p>

<ul>

<li>Data types</li>

<li>Missing values</li>

<li>Unique values</li>

<li>Basic statistics</li>

<li>Graphical representations</li>

</ul>

<p><strong>The code below opens an HTML report that summarizes your entire data frame</strong>, making it easy to spot anomalies or areas that need attention:</p>

<p><img src="/assets/084_dfSummary.jpg" alt="dfSummary for Quick Data Summaries" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 084 Folder)</a></p>
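<p>As a rough sketch of this step, here is how <code class="language-plaintext highlighter-rouge">dfSummary()</code> can be called on a small data frame. The <code class="language-plaintext highlighter-rouge">churn_tbl</code> data below is a made-up stand-in for the tutorial’s churn dataset, and the exact code in the screenshot may differ:</p>

```r
library(summarytools)

# Hypothetical churn data (illustrative values only)
churn_tbl <- data.frame(
  tenure          = c(1, 34, 2, 45, 8, 22),
  monthly_charges = c(29.85, 56.95, 53.85, 42.30, 99.65, 89.10),
  contract        = factor(c("Month-to-month", "One year", "Month-to-month",
                             "One year", "Month-to-month", "Two year")),
  churn           = factor(c("No", "No", "Yes", "No", "Yes", "No"))
)

# Build the summary: one row per column of the data frame
df_summary <- dfSummary(churn_tbl)

# Plain-text version in the console
print(df_summary)

# HTML report in the RStudio viewer or browser (only in interactive sessions)
if (interactive()) stview(df_summary)
```

<p>Here <code class="language-plaintext highlighter-rouge">stview()</code> is the <code class="language-plaintext highlighter-rouge">summarytools</code> viewer function. Using it instead of <code class="language-plaintext highlighter-rouge">view()</code> avoids a name clash when the <code class="language-plaintext highlighter-rouge">tidyverse</code> is also loaded.</p>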

<h2 id="step-3-descriptive-statistics-with-descr">Step 3: Descriptive Statistics with <code class="language-plaintext highlighter-rouge">descr()</code></h2>

<p><strong>To get descriptive statistics for your numeric variables</strong>, use the <code class="language-plaintext highlighter-rouge">descr()</code> function. This function provides detailed statistics such as:</p>

<ul>

<li>Mean</li>

<li>Median</li>

<li>Standard deviation</li>

<li>Interquartile range (IQR)</li>

<li>Min</li>

<li>Max</li>

<li>Skewness</li>

<li>Kurtosis</li>

</ul>

<p>Run this code:</p>

<p><img src="/assets/084_descr.jpg" alt="descr for Quick Numeric Statistics" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 084 Folder)</a></p>
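<p>To make this concrete, here is a minimal sketch of <code class="language-plaintext highlighter-rouge">descr()</code> that requests the statistics listed above. The data frame is a hypothetical stand-in for the churn dataset, and the <code class="language-plaintext highlighter-rouge">stats</code> selection is my own choice for illustration, not necessarily what the screenshot uses:</p>

```r
library(summarytools)

# Hypothetical numeric columns from a churn dataset (illustrative values only)
churn_numeric <- data.frame(
  tenure          = c(1, 34, 2, 45, 8, 22),
  monthly_charges = c(29.85, 56.95, 53.85, 42.30, 99.65, 89.10)
)

# Ask only for the statistics discussed in this step
numeric_stats <- descr(
  churn_numeric,
  stats = c("mean", "med", "sd", "iqr", "min", "max", "skewness", "kurtosis")
)

# Statistics appear as rows, variables as columns
print(numeric_stats)
```

<p>If you leave out the <code class="language-plaintext highlighter-rouge">stats</code> argument, <code class="language-plaintext highlighter-rouge">descr()</code> returns its full default set of statistics.</p>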

<h2 id="step-4-frequency-tables-with-freq">Step 4: Frequency Tables with <code class="language-plaintext highlighter-rouge">freq()</code></h2>

<p><strong>For categorical variables, the <code class="language-plaintext highlighter-rouge">freq()</code> function generates frequency tables with the count and percentage of each category.</strong> This helps you see how prevalent each category is within your data.</p>

<p>Run this code:</p>

<p><img src="/assets/084_freq.jpg" alt="freq for Frequency Statistics" /></p>

<p class="text-center date"><a href="https://learn.business-science.io/r-tips-newsletter?el=website" target="_blank">Get the Code (In the R-Tip 084 Folder)</a></p>
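<p>Here is a small sketch of <code class="language-plaintext highlighter-rouge">freq()</code> on a single categorical column. The <code class="language-plaintext highlighter-rouge">contract</code> values are hypothetical and only stand in for a column from the churn dataset:</p>

```r
library(summarytools)

# Hypothetical categorical column (illustrative values only)
churn_tbl <- data.frame(
  contract = factor(c("Month-to-month", "One year", "Month-to-month",
                      "One year", "Month-to-month", "Two year"))
)

# Counts and percentages for each contract type, plus a Total row
contract_freq <- freq(churn_tbl$contract)

print(contract_freq)
```

<p>The result can be indexed like a matrix, for example <code class="language-plaintext highlighter-rouge">contract_freq["One year", "Freq"]</code>, if you want to pull a single count into later code.</p>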

<h1 id="conclusions">Conclusions:</h1>

<p>By leveraging the <code class="language-plaintext highlighter-rouge">summarytools</code> package, you can perform a comprehensive exploratory data analysis with just a few lines of code. This not only saves you time but also enhances your understanding of the data, allowing you to make better-informed decisions and setting you up for better predictive modeling and production deployment.</p>

<p><strong>But there’s more to becoming a data scientist.</strong></p>

<p>If you would like to <strong>grow your Business Data Science skills with R</strong>, then please read on…</p>

<h1 id="need-to-advance-your-business-data-science-skills">Need to advance your business data science skills?</h1>

<p>I’ve helped 6,107+ students learn data science for business from an elite business consultant’s perspective.</p>

<p>I’ve worked with Fortune 500 companies like S&P Global, Apple, MRM McCann, and more.</p>

<p>And I built a training program that gets my students life-changing data science careers (don’t believe me? <a href="https://university.business-science.io/p/5-course-bundle-machine-learning-web-apps-time-series/">see my testimonials here</a>):</p>

<h4 class="text-center">

6-Figure Data Science Job at CVS Health ($125K)<br /><div style="height:10px;"></div>

Senior VP Of Analytics At JP Morgan ($200K)<br /><div style="height:10px;"></div>

50%+ Raises & Promotions ($150K)<br /><div style="height:10px;"></div>

Lead Data Scientist at Northwestern Mutual ($175K)<br /><div style="height:10px;"></div>

2X-ed Salary (From $60K to $120K)<br /><div style="height:10px;"></div>

2 Competing ML Job Offers ($150K)<br /><div style="height:10px;"></div>